Are you thinking about becoming a professional media server operator or user, but aren't sure which skills you need? This is blog number five in the series, and this time we're shining a light on 3D files, formats and projection mapping.

These posts are based on recommendations from the fine people of the Media Server Professionals (Facebook) group.

- Introduction to 3D

- Texturing

- Rendering

- UV mapping

- 3D stereoscopy

- Projection mapping

- Conclusion

Just to be clear… This article is NOT going to teach you to understand, use and master 3D applications, such as 3D Studio Max, Maya, Blender, Cinema 4D, etc. But I will try to provide you with some basic understanding of 3D and especially how many media servers use UV mapping techniques for playback of content using 3D models.

3D models and UV mapping are used to map pixels perfectly onto any kind of device, whether it’s a specially designed LED wall, projection mapping on a physical object or in some cases DMX fixtures. Some media server systems also support 3D stages, where you can import the entire venue and map the content directly onto the stage, for previsualization and project development purposes.

Introduction to 3D

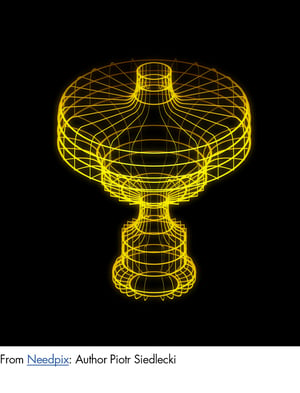

Three-dimensional (3D) models represent objects, characters or scenes as a collection of points in 3D space, connected by lines, curves, polygons, etc. to form a mesh. The example shows a 3D model (wireframe) object with different shapes.

3D is used basically everywhere, from illustrations in printed materials and objects on web pages to entire animated movies and content for media server playback.

Here's an example of objects, to illustrate the concept of 3D space.

The work process of creating a complete 3D object, stage or scene can be broken down into the following tasks:

- Modelling

- Animation

- Texturing

- Rendering

First you create your object or stage, and there are many ways to achieve that with techniques such as spline or patch modelling, box modelling and poly modelling. There are dozens of resources online to learn about the differences.

If you want to create an object that moves or performs something, you add animation. This can be achieved in different ways:

- Keyframes, where you set the object's state at chosen frames and the software fills in the frames between them.

- Splines (paths and curves, where you want your object to move)

- Importing tracking data/motion capture data, from body suits, etc.

- Use the built-in physics engine in the 3D animation software
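To give a feel for keyframing: 3D software typically lets you set a value at selected frames and then calculates the frames in between. Below is a minimal Python sketch of that idea using straight-line (linear) interpolation. The function name `interpolate_keyframes` and the sample values are made up for illustration, and real applications usually offer smoother curves as well.

```python
from bisect import bisect_right


def interpolate_keyframes(keyframes, frame):
    """Return the value at `frame`, given (frame, value) keyframes sorted by frame.

    Hypothetical helper: linear interpolation only, holding the first and
    last values outside the keyframed range.
    """
    frames = [f for f, _ in keyframes]
    if frame <= frames[0]:
        return keyframes[0][1]
    if frame >= frames[-1]:
        return keyframes[-1][1]
    # Find the pair of keyframes that surrounds the requested frame.
    i = bisect_right(frames, frame)
    (f0, v0), (f1, v1) = keyframes[i - 1], keyframes[i]
    t = (frame - f0) / (f1 - f0)  # 0.0 at the first key, 1.0 at the second
    return v0 + t * (v1 - v0)


# Assumed example: an object's X position keyed at frames 0, 50 and 100.
keys = [(0, 0.0), (50, 100.0), (100, 50.0)]
for frame in (0, 25, 50, 75, 100):
    print(frame, interpolate_keyframes(keys, frame))
```

Only three values are stored, yet every frame in the range gets a position. That is the practical benefit of keyframes compared with posing an object on every single frame.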

Texturing

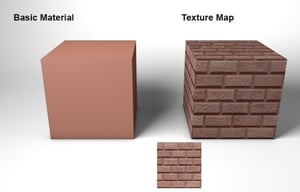

Texture mapping is when you apply an image, or even the look of a physical texture, to a 3D object without interfering with the object’s mesh at all. A great example is to be found at Digital Learning Center.

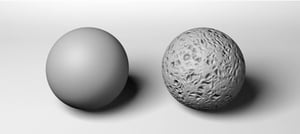

The image examples shown here are from that page. On the left of the first image, you have a 3D object without any texture mapped to it.

Bump mapping is a technique for adding the look of physical texture to an object without adding geometry (mesh data). A bump map will never be as detailed as a real change to the 3D object: in the example here, the shape on the right still has a perfectly smooth surface, even though it gives the impression of something else.

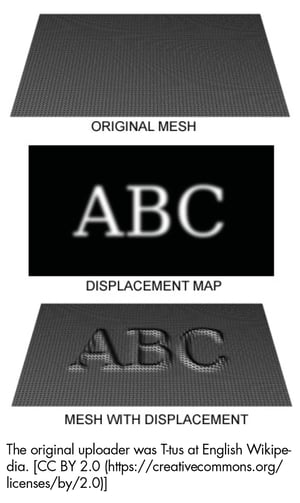

Displacement mapping is a technique in which the geometric positions of points on the textured surface are actually displaced.
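The difference from bump mapping is easier to see in code. Here is a minimal Python sketch, assuming each point has a surface normal and a height value read from a displacement map. The function name `displace_vertices`, the `scale` parameter and the sample data are illustrative assumptions, not any particular application's API.

```python
def displace_vertices(vertices, normals, heights, scale=1.0):
    """Move each vertex along its normal by height * scale.

    Hypothetical helper: vertices and normals are (x, y, z) tuples, and
    heights holds one value per vertex (e.g. sampled from a greyscale map).
    """
    displaced = []
    for vertex, normal, height in zip(vertices, normals, heights):
        displaced.append(
            tuple(p + n * height * scale for p, n in zip(vertex, normal))
        )
    return displaced


# Assumed example: a flat strip of three points, all facing up the Z axis.
vertices = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 0.0)]
normals = [(0.0, 0.0, 1.0)] * 3
heights = [0.0, 0.5, 1.0]

print(displace_vertices(vertices, normals, heights, scale=0.2))
```

The points themselves move, so the silhouette of the object changes. A bump map would leave `vertices` untouched and only alter how the surface is shaded.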

In many cases, when importing 3D models into a media server, you can use the media server itself to perform the texturing. This is done through UV mapping – which will be covered later in this article.

Rendering

The final stage is to render your object or stage. Here you place your camera (viewpoint) and light source(s). This is a critical stage and will have a great impact on shadows, reflections, and the like, in the final image.

Here is an example of a fully rendered model with lights, etc.
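To illustrate why light placement matters so much, here is a small Python sketch of one of the simplest shading rules, often called Lambert or diffuse shading: a surface gets brighter the more directly it faces the light. Real renderers do far more than this (shadows, reflections, and so on), and the function names and positions below are assumptions made up for the example.

```python
import math


def normalize(vector):
    """Scale a non-zero (x, y, z) vector to length 1."""
    length = math.sqrt(sum(c * c for c in vector))
    return tuple(c / length for c in vector)


def lambert_intensity(surface_point, normal, light_position):
    """Return diffuse brightness between 0.0 (unlit) and 1.0 (fully lit).

    Hypothetical helper: brightness is the cosine of the angle between the
    surface normal and the direction towards the light, clamped at zero.
    """
    to_light = normalize(
        tuple(l - p for l, p in zip(light_position, surface_point))
    )
    n = normalize(normal)
    return max(0.0, sum(a * b for a, b in zip(n, to_light)))


# Assumed example: a point on a floor facing up the Z axis.
point = (0.0, 0.0, 0.0)
normal = (0.0, 0.0, 1.0)

for light in [(0.0, 0.0, 5.0), (5.0, 0.0, 5.0), (5.0, 0.0, 0.0)]:
    print(light, round(lambert_intensity(point, normal, light), 3))
```

Moving the light from directly overhead towards the horizon makes the same surface point steadily darker, which is why the lighting setup changes the rendered image so much.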

Now it is time to home in on an aspect that we need to fully understand when we work with 3D objects in media servers: UV mapping.

UV Mapping

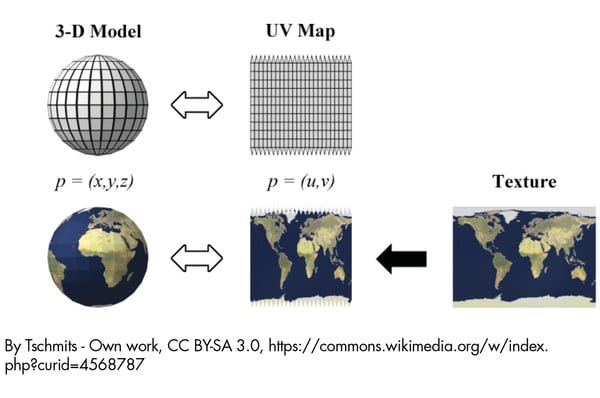

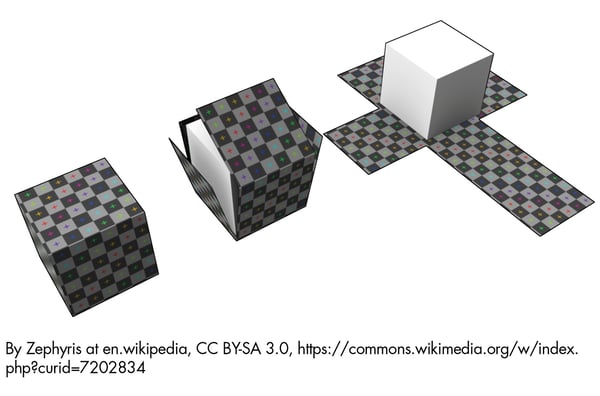

UV mapping gets its name from the axes of the 2D texture, also known as texture coordinates. The letters X, Y and Z are already used for the three axes in 3D space, so U is the horizontal axis of the texture and V the vertical. UV mapping is the process of projecting (“mapping”) a 2D image onto a 3D model’s surface, as described earlier in Texturing.

3D models brought into the media server must have texture coordinates (UV) assigned in the 3D application beforehand. This is necessary in order to be able to apply the texture images correctly onto the 3D object.

See two examples from Wiki, where one is a representation of the world, and the other is a cube.
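As a rough illustration of what a UV coordinate does, here is a minimal Python sketch that looks up the pixel belonging to a given (U, V) pair. It assumes U and V run from 0 to 1 and that V = 0 is the bottom of the image. That last convention varies between applications, so treat it as an assumption, along with the function name `sample_texture` and the tiny 2 x 2 "texture".

```python
def sample_texture(texture, u, v):
    """Return the pixel at texture coordinate (u, v), nearest-pixel lookup.

    Hypothetical helper: `texture` is a list of rows, top row first.
    Assumes u and v are in the range 0..1 with v = 0 at the bottom edge.
    """
    height = len(texture)
    width = len(texture[0])
    # Clamp so that coordinates outside 0..1 stick to the image edge.
    u = min(max(u, 0.0), 1.0)
    v = min(max(v, 0.0), 1.0)
    x = min(int(u * width), width - 1)
    y = min(int((1.0 - v) * height), height - 1)  # flip V: rows start at top
    return texture[y][x]


# Assumed example: a 2 x 2 image whose "pixels" are just labels.
texture = [
    ["top-left", "top-right"],
    ["bottom-left", "bottom-right"],
]

# Each corner of a 3D face carries its own UV pair.
face_uvs = [(0.0, 0.0), (1.0, 0.0), (1.0, 1.0), (0.0, 1.0)]
for u, v in face_uvs:
    print((u, v), sample_texture(texture, u, v))
```

Because the UV pairs are stored with the model, any image of any resolution can be swapped in and will land on the same part of the surface.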

3D stereoscopy

3D stereoscopy is a completely different thing: it does not really have anything to do with 3D files and 3D models directly, but is a technique for seeing “real” depth in an image. It works by presenting two slightly offset images to the brain, one for each eye, which creates an impression of depth. To achieve this, you need a set of glasses that allows each eye to see only the image intended for it. The brain then merges these images and we experience depth.

If you want to read more about 3D and why it flopped so massively in the TV and at-home market, have a look at a previous blog post on the topic.

You’ll find 3D stereoscopic displays implemented in a wide range of applications, including movies, scientific visualisation, computer-aided design, remote medical surgery and, of course, entertainment (theme parks, etc).

There’s more than one way to cook an egg, and so it is with playback of 3D stereoscopic content. Some system setups require two separate channels fed to the 3D display device (one for the left eye and one for the right), while other systems require that you feed both left and right in the same channel. In the latter case, a 120Hz output is often recommended, allowing the display to separate the frames at 60Hz per eye (to avoid flickering).

The display in use will have its own preference, and a media server should be able to play back at least one of the two methods described above.
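For the single-channel case, one common arrangement is to alternate left and right frames in one stream. The Python sketch below assumes that frame-sequential layout (other single-channel layouts, such as side-by-side, also exist), and the function names are invented for the example.

```python
def interleave_stereo(left_frames, right_frames):
    """Merge two per-eye frame lists into one alternating L, R, L, R stream.

    Hypothetical helper: assumes a frame-sequential single-channel setup.
    """
    if len(left_frames) != len(right_frames):
        raise ValueError("left and right must contain the same number of frames")
    stream = []
    for left, right in zip(left_frames, right_frames):
        stream.append(("L", left))
        stream.append(("R", right))
    return stream


def per_eye_rate(output_hz):
    """Each eye sees every second frame, so it gets half the output rate."""
    return output_hz / 2


# Assumed example: two frames per eye, identified by simple labels.
print(interleave_stereo(["left-0", "left-1"], ["right-0", "right-1"]))
print(per_eye_rate(120))
```

This is where the 120Hz recommendation comes from: the single stream carries twice as many frames, so a 120Hz output leaves 60Hz for each eye.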

Projection Mapping

The term projection mapping explains what it is all about: it is projection, and it is the mapping of the projected image onto an object, a building, a car, etc. Projection mapping can be a simple indoor stage effect or larger-than-life visuals on entire arenas, landmark buildings or other architectural landscapes. Interest in projection mapping has grown tremendously over the last few years and, according to a report, the projection mapping market accounted for more than a billion USD in 2017, with forecast growth of 21% per year until 2023.

Projection mapping has found its way into mainstream events and not without reason. It can transform an otherwise boring arena or building into a spectacular live event, perfect for:

- Brand-building – it can generate a lot of publicity

- Audience engagement – mapping attracts attention and adds the wow factor

- Creating memorable moments – a perfect feature to ensure your event is remembered

- Social reach – mapping is perfect for social media sharing, with great potential for going viral

We have covered projection mapping in earlier blog posts (check out the list below!) as well as in The Marketers' Guide to Projection Mapping. To relate projection mapping to the realm of 3D, take the following example from the award-winning Dataton booth. Here, Dataton had a physical object on a rotating stand, tracked the rotation and mapped projector content onto the object’s physical features.

This is only possible because the 3D model was imported into the media server and UV mapping was used to map content onto the object.
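To show why the combination of a 3D model and UV coordinates makes this work, here is a simplified Python sketch. It is not how Dataton's software does it, just an illustration under assumptions: the stand rotates around the vertical (Y) axis, the tracked angle arrives in degrees, and the model is a plain list of (position, UV) pairs. All names are made up.

```python
import math


def rotate_about_y(vertex, angle_degrees):
    """Rotate an (x, y, z) point around the vertical Y axis."""
    x, y, z = vertex
    a = math.radians(angle_degrees)
    return (
        x * math.cos(a) + z * math.sin(a),
        y,
        -x * math.sin(a) + z * math.cos(a),
    )


def apply_tracked_rotation(model, angle_degrees):
    """Return a copy of the model turned by the tracked angle.

    Hypothetical helper: `model` is a list of (position, uv) pairs.
    Positions move with the stand, while the UV pairs stay as they are.
    """
    return [(rotate_about_y(pos, angle_degrees), uv) for pos, uv in model]


# Assumed example: two points of an object, each with its UV coordinate.
model = [
    ((1.0, 0.0, 0.0), (0.0, 0.0)),
    ((0.0, 1.0, 0.0), (0.5, 1.0)),
]

for position, uv in apply_tracked_rotation(model, 90.0):
    print([round(c, 3) for c in position], uv)
```

The virtual model turns in step with the physical object, and because each point keeps its UV coordinate, the same part of the content stays attached to the same physical feature throughout the rotation.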

Conclusion

That rounds off my overview of content for media server playback. In the next instalment, we’ll move over to the dark side of the industry – the display side. Projectors, LED walls, screens… See you soon! 😎

Before wrapping up, I want to thank the following people who contributed great ideas in the forum post:

Patrick Campbell, Ian McClain, Ola Fredenlund, Matt Ardine, Marek Papke, Eric Gazzillo, Axel Sundbotten, Joe Bleasdale, Parker Langvardt, Alex Mysterio Mueller, Christopher John Bolton, Andy Bates, David Gillett, Charlie Cooper, Tom Bass, Fred Lang, Nhoj Yelnif, Hugh Davies-Webb, Marcus Bayer, Arran Vj-Air, Manny Conde, Joel Adria, Alex Oliszewski, Ruben Laine, Jan Huewel, Majid Younis, Ernst Ziller, Marco Pastuovic, Geoffrey Platt, Ted Pallas, Dale Rehbein, Michael Kohler, Joe Dunkley, John Bulver, Jack Banks, Stuart McGowan, Todd Neville Scrutchfield

More blogs on mapping:

Five reasons to map a building